Keeping a smart manual database up to date matters for any organization that manages information: updates preserve accuracy and relevance, sustain performance and security, and keep the database compliant with evolving industry standards.

Regular updates maintain data integrity and support informed decision-making processes within the system, benefiting overall operational efficiency.

The Importance of Regular Updates

Regular updates to a smart manual database are paramount for sustained functionality and reliability. Maintaining an up-to-date database ensures the information it contains remains accurate and relevant to current organizational needs. This proactive approach directly impacts decision-making, allowing for informed strategies based on dependable data.

Furthermore, consistent updates bolster database performance, enhance security protocols against emerging threats, and guarantee adherence to evolving industry standards. Neglecting updates can lead to data inconsistencies, system errors, and potential compliance issues, ultimately hindering operational efficiency and increasing risk. A well-maintained database is a cornerstone of a successful organization.

Defining “Smart” Manual Databases

“Smart” manual databases, while relying on human input, incorporate features that enhance data management beyond traditional methods. These databases often leverage unique identifiers, like external IDs, to facilitate updates from external systems, such as Salesforce. This allows for efficient upsert operations – updating existing records or inserting new ones – without needing the Salesforce record’s internal ID.

The “smart” aspect lies in the database’s ability to intelligently recognize and process incoming data, grouping new and existing records for optimized DML (Data Manipulation Language) processing. This capability streamlines updates and minimizes potential errors, improving overall data integrity.

Backup and Preparation

Prior to any update, a comprehensive backup is essential, including securing the backup location and considering database deletion and recreation for a clean start.

Database Backup Procedures

Database backup procedures are paramount before initiating any update process. Utilizing SQL Server Management Studio, back up the SMART TeamWorks 4 Server database, ensuring the backup is saved to a secure, designated location.

This safeguard protects against unforeseen issues during the update. A full backup captures the entire database, allowing for complete restoration if necessary. Regularly scheduled backups, in addition to pre-update backups, are highly recommended. Document the backup process meticulously, including the date, time, and location of each backup, for efficient recovery and audit trails.
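As a rough illustration of the documentation step, the sketch below builds a T-SQL full-backup statement together with a log entry recording the date, time, and location. The database name, the backup share, and the helper name `build_backup_statement` are assumptions made for the example; the statement is only constructed here, not executed.

```python
from datetime import datetime, timezone


def build_backup_statement(database: str, backup_dir: str, when: datetime) -> tuple[str, dict]:
    """Return a T-SQL full-backup statement and a log entry describing it."""
    stamp = when.strftime("%Y%m%d_%H%M%S")
    # Square brackets quote the database name; a closing bracket inside a name is doubled.
    quoted = "[" + database.replace("]", "]]") + "]"
    path = f"{backup_dir}\\{database.replace(' ', '_')}_{stamp}.bak"
    escaped_path = path.replace("'", "''")
    statement = (
        f"BACKUP DATABASE {quoted} TO DISK = N'{escaped_path}' "
        "WITH INIT, CHECKSUM;"
    )
    # The log entry captures what the text asks to document: date, time, and location.
    log_entry = {"database": database, "taken_at": when.isoformat(), "location": path}
    return statement, log_entry


stmt, entry = build_backup_statement(
    "SMART TeamWorks 4 Server",  # assumed database name
    r"\\backup-host\sql",        # hypothetical secure share
    datetime(2024, 1, 15, 2, 30, 0, tzinfo=timezone.utc),
)
print(stmt)
print(entry)
```

The generated statement can be pasted into a query window in SQL Server Management Studio, and the log entry appended to whatever audit record the team already keeps.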

Creating a Secure Backup Location

Creating a secure backup location is vital for protecting your database against data loss. This location should be physically separate from the server hosting the SMART TeamWorks 4 Server database, mitigating risks from hardware failures or site-specific disasters.

Consider utilizing network-attached storage (NAS), cloud storage services with robust security measures, or a dedicated backup server. Implement access controls, restricting access to authorized personnel only. Regularly verify the integrity of backups stored in this location to ensure recoverability when needed. Encryption adds an extra layer of security.
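One simple way to verify that a stored backup file has not changed is to record a checksum when the backup is taken and compare it later. The sketch below assumes SHA-256 and uses a throwaway file in place of a real `.bak` file; the function names are illustrative.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large backups do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def backup_is_intact(path: Path, recorded_digest: str) -> bool:
    """True when the file still exists and matches the digest recorded at backup time."""
    return path.exists() and sha256_of(path) == recorded_digest


# Demonstration with a temporary file standing in for a backup.
with tempfile.TemporaryDirectory() as tmp:
    backup = Path(tmp) / "example.bak"
    backup.write_bytes(b"pretend backup contents")
    recorded = sha256_of(backup)
    intact_before = backup_is_intact(backup, recorded)
    backup.write_bytes(b"corrupted contents")  # simulate damage in storage
    intact_after = backup_is_intact(backup, recorded)

print(intact_before, intact_after)
```

A matching checksum only shows that the file is unchanged. It does not prove the backup can be restored, so a periodic test restore (or SQL Server's `RESTORE VERIFYONLY`) remains worthwhile.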

Database Deletion and Recreation

Database deletion and recreation is a preparatory step before applying updates, ensuring a clean environment. Back up the existing database thoroughly before proceeding. Delete the current database instance within SQL Server Management Studio. Subsequently, recreate a new, empty database with the same name and collation settings as the original.

This process eliminates potential conflicts arising from outdated schema or data structures. Following recreation, execute the `update database.sql` script to populate the new database with the updated schema and initial data, preparing it for the SMART TeamWorks 4 Server application.
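The delete, recreate, and run-script sequence could be scripted roughly as follows. This is a sketch under stated assumptions: the database name, the collation value, the server name, and the script path are placeholders, and the `sqlcmd` argument list is only built, not run.

```python
def recreate_statements(database: str, collation: str) -> list[str]:
    """T-SQL to drop the database and create an empty one with the same name and collation."""
    quoted = "[" + database.replace("]", "]]") + "]"
    return [
        f"DROP DATABASE {quoted};",
        f"CREATE DATABASE {quoted} COLLATE {collation};",
    ]


def sqlcmd_args(server: str, database: str, script_path: str) -> list[str]:
    """Argument list for running a script with the sqlcmd utility.

    -S: server, -E: Windows authentication, -b: exit with an error code on failure,
    -d: target database, -i: input script.
    """
    return ["sqlcmd", "-S", server, "-E", "-b", "-d", database, "-i", script_path]


statements = recreate_statements(
    "SMART TeamWorks 4 Server",       # assumed database name
    "SQL_Latin1_General_CP1_CI_AS",   # placeholder: use the original database's collation
)
args = sqlcmd_args(
    "localhost",                        # hypothetical server
    "SMART TeamWorks 4 Server",
    r"C:\Scripts\update database.sql",  # hypothetical location of the script
)
print(statements)
print(args)
```

Passing the arguments as a list, for example to `subprocess.run(args, check=True)`, avoids quoting problems with the spaces in the database and script names.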

Update Methods & Techniques

Update methods include SQL scripts like `update database.sql`, leveraging external IDs for Salesforce integration, and utilizing upsert operations for efficient DML grouping.

Using Update Scripts (SQL Server Example)

Employing update scripts, particularly within a SQL Server environment, provides a structured and repeatable method for database modifications. Begin by backing up your SMART TeamWorks 4 Server database using SQL Server Management Studio, ensuring a recovery point. Subsequently, delete the existing database and recreate it.

Then, navigate to the RMS Database Scripts-Update folder and execute the `update database.sql` script in SQL Server Management Studio, targeting the newly created SMART TeamWorks 4 Server database. The script applies the required schema and data changes in a fixed order, which keeps the update consistent and repeatable.

The `update database.sql` Script

The `update database.sql` script serves as a central component in maintaining the SMART TeamWorks 4 Server database. This script, executed within SQL Server Management Studio, contains a series of SQL commands designed to modify the database schema and data. It addresses updates related to functionality, performance, and security enhancements.

Run this script with care: it alters the database structure to keep it compatible with the latest SMART Practice Aids versions. Always verify a successful backup before running the script, so that a rollback is possible if unforeseen issues arise during the update.

Understanding External IDs in Salesforce Updates

External IDs within Salesforce are crucial for updating records originating from external systems without needing the Salesforce record’s ID. By designating a field as an external ID, Salesforce can accurately identify existing records during an upsert operation. This functionality is particularly useful when integrating with smart manual databases, where external systems are the source of truth.

Salesforce groups new and existing records based on the external ID, which optimizes Data Manipulation Language (DML) operations and improves update efficiency. Matching on an external ID in this way streamlines synchronization and keeps data consistent.

Upsert Operations and DML Grouping

Upsert operations in Salesforce are fundamental when synchronizing data from external sources, like a smart manual database. They efficiently handle both updates to existing records and insertions of new ones in a single process. Salesforce demonstrates intelligence by automatically grouping new records together and existing records separately before executing the DML operations.

This grouping significantly optimizes performance, reducing the number of DML statements required. This is especially beneficial when dealing with large datasets from external systems, ensuring faster and more efficient data integration and maintenance.
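The grouping idea can be modeled in a few lines. The sketch below is an in-memory illustration of upsert semantics, not Salesforce's implementation: incoming records are split into an update group and an insert group by external ID, and each group is then applied in one pass. The field name `Manual_Id__c` is hypothetical, and the incoming batch is assumed to contain no duplicate external IDs.

```python
def upsert(existing: dict[str, dict], incoming: list[dict], external_id_field: str) -> tuple[int, int]:
    """Apply incoming records to `existing`, keyed by external ID.

    Returns (number_updated, number_inserted).
    """
    # Group first: one list of records that match an existing key, one list that do not.
    to_update = [r for r in incoming if r[external_id_field] in existing]
    to_insert = [r for r in incoming if r[external_id_field] not in existing]

    for record in to_update:
        existing[record[external_id_field]].update(record)
    for record in to_insert:
        existing[record[external_id_field]] = dict(record)
    return len(to_update), len(to_insert)


store = {"M-001": {"Manual_Id__c": "M-001", "Title": "Pump manual"}}
batch = [
    {"Manual_Id__c": "M-001", "Title": "Pump manual, rev 2"},  # matches: update
    {"Manual_Id__c": "M-002", "Title": "Valve manual"},        # no match: insert
]
updated, inserted = upsert(store, batch, "Manual_Id__c")
print(updated, inserted)
```

Grouping before applying means each kind of operation is issued once per batch rather than once per record, which is the efficiency the paragraph describes.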

Salesforce Specific Updates

Salesforce updates leveraging the externalId argument allow updating records originating from external systems without needing the Salesforce record’s unique ID.

Utilizing the `externalId` Argument

The optional externalId argument within Salesforce is a powerful tool for updating records from external systems. It instructs Salesforce to identify records based on a specified field marked with the “External ID” checkbox. This is particularly useful when you lack the Salesforce record’s native ID but possess a unique identifier from the originating system.

Salesforce intelligently groups new records together and existing records together before performing Data Manipulation Language (DML) operations, optimizing the update process. Utilizing externalId enables efficient upsert operations, seamlessly updating existing records or creating new ones as needed, streamlining data synchronization.
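For readers working over HTTP, the Salesforce REST API exposes the same idea by placing the external ID field and value in the resource path of a `PATCH` request. The sketch below only builds such a request; the instance URL, the API version, the object, and the `Manual_Id__c` field are assumptions for illustration, and no network call is made.

```python
from urllib.parse import quote


def upsert_request(
    instance_url: str,
    api_version: str,
    sobject: str,
    external_id_field: str,
    external_id_value: str,
    fields: dict,
) -> tuple[str, str, dict]:
    """Build the method, URL, and JSON body for an upsert keyed on an external ID."""
    path = (
        f"/services/data/{api_version}/sobjects/{quote(sobject, safe='')}/"
        f"{quote(external_id_field, safe='')}/{quote(str(external_id_value), safe='')}"
    )
    # The external ID is addressed in the URL, so it is left out of the body.
    body = {k: v for k, v in fields.items() if k != external_id_field}
    return "PATCH", instance_url.rstrip("/") + path, body


method, url, body = upsert_request(
    "https://example.my.salesforce.com",  # hypothetical instance
    "v60.0",                              # assumed API version
    "Account",
    "Manual_Id__c",                       # hypothetical external ID field
    "DOC/42",
    {"Manual_Id__c": "DOC/42", "Name": "Pump manual"},
)
print(method, url, body)
```

Percent-encoding the value matters because external IDs from other systems may contain characters such as `/` that would otherwise change the path.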

Updating Records from External Systems

Successfully updating records from external systems relies on leveraging unique identifiers. Employing the externalId argument in Salesforce allows for seamless synchronization, even without knowing the Salesforce record ID. This method is vital when data originates from sources outside the Salesforce ecosystem, ensuring consistency across platforms.

By matching external IDs, Salesforce can accurately identify existing records for updates or create new ones if no match is found. This process minimizes data duplication and maintains data integrity, streamlining workflows and improving overall data management efficiency.

Recognizing New vs. Existing Records

Salesforce distinguishes between new and existing records during upsert operations. The optional `externalId` argument is key: Salesforce examines the specified field (one marked with the External ID checkbox) to determine whether each incoming record already exists. This is particularly useful when integrating data from external systems where Salesforce IDs are unavailable.

The system efficiently groups new records together and existing records separately before executing Data Manipulation Language (DML) operations, optimizing performance. This smart grouping ensures updates are applied correctly, avoiding unintended record creation or modification.

SMART Practice Aids Updates

SMART Practice Aids recognizes a step only when its row is inserted with the same properties as an existing step. Dragging a blank line into place can leave the row unrecognized, which prevents it from being updated.

Correct Methods for Inserting Steps

To ensure SMART Practice Aids accurately recognizes new steps within your manual database, employing the correct insertion method is paramount. The system functions optimally when a new row is inserted with properties identical to an existing step. This mirroring of attributes allows the software to definitively identify the new entry as a valid step during subsequent processing.

Conversely, avoid methods like dragging a blank line into position. These alternative approaches frequently result in the system failing to recognize the row as a legitimate step, preventing its inclusion in updates performed via the ‘Update SMART Engagement’ option. Consistent application of the correct method guarantees data integrity and seamless functionality.

Avoiding Non-Recognized Step Rows

Preventing the creation of non-recognized step rows is vital for maintaining a functional and accurate SMART Practice Aids database. Utilizing improper insertion techniques, such as simply dragging a blank line into the step sequence, frequently leads to these problematic entries. The system relies on specific row properties to identify valid steps.

When a row lacks these defining characteristics, SMART Practice Aids will not acknowledge it during updates, particularly when utilizing the ‘Update SMART Engagement’ feature. Consistently employing the recommended method – inserting a row mirroring existing step attributes – ensures all steps are correctly recognized and processed, preserving data integrity.

The `Update SMART Engagement` Option

The ‘Update SMART Engagement’ option within SMART Practice Aids provides a streamlined method for propagating changes made to step rows throughout the system. However, its effectiveness hinges on the correct insertion of those steps. This feature relies on recognizing rows with specific, pre-defined properties to identify them as valid steps within an engagement.

Rows inserted using incorrect methods – like dragging blank lines – will be ignored by this option, preventing the update from applying to those entries. Therefore, consistently using the recommended insertion technique, duplicating existing step row properties, is crucial for a successful and comprehensive update.

Smartctl Manual Updates

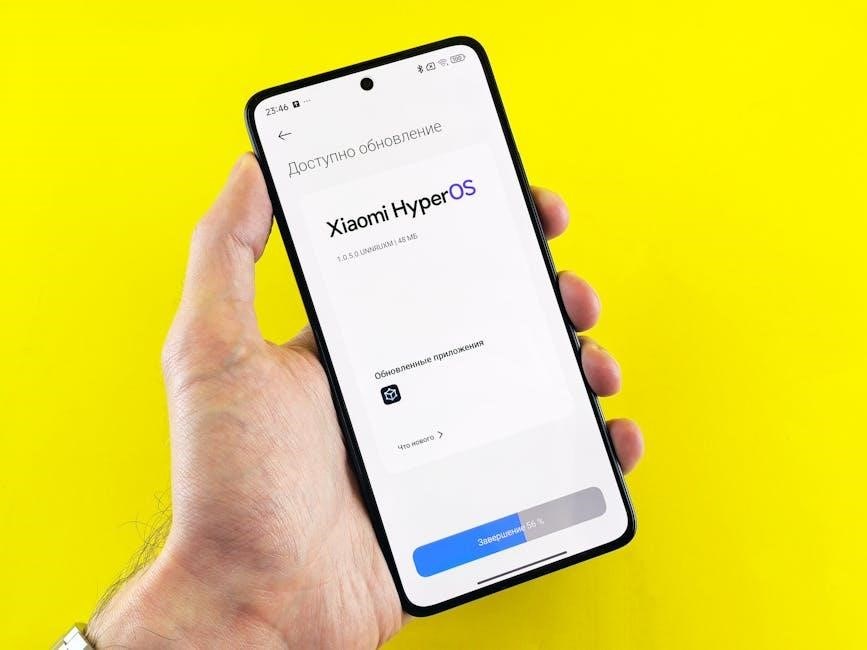

Smartctl can be updated manually. The running example is a user who has version 6.12.10 installed and wants to upgrade to version 7, which requires a deliberate update process.

Checking Current Smartctl Version

Before initiating any manual update, verifying the currently installed smartctl version is paramount. This establishes a baseline and confirms the necessity of the update. Users can determine their existing version through command-line interface (CLI) execution.

Typing `smartctl --version` into the terminal displays the installed version number, such as 6.12.10. Knowing the installed version allows for a targeted update to the desired release, like version 7, and helps prevent compatibility issues during the update.
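When the version check needs to be automated, the version number can be parsed from the first line of the command's output and compared with the target. The sketch below assumes the output begins with `smartctl` followed by a dotted version number; the sample line is illustrative rather than captured from a real system.

```python
import re


def parse_smartctl_version(output: str) -> tuple[int, ...]:
    """Extract the dotted version that follows the word 'smartctl' at the start of a line."""
    match = re.search(r"^smartctl\s+(\d+(?:\.\d+)*)", output, flags=re.MULTILINE)
    if match is None:
        raise ValueError("no smartctl version found in output")
    return tuple(int(part) for part in match.group(1).split("."))


def needs_upgrade(installed: tuple[int, ...], target: tuple[int, ...]) -> bool:
    """Tuples compare element by element, so (6, 12, 10) < (7,) is True."""
    return installed < target


sample = "smartctl 6.12.10 (illustrative banner line)\n"
installed = parse_smartctl_version(sample)
print(installed, needs_upgrade(installed, (7,)))
```

On a real system the text could be obtained with `subprocess.run(["smartctl", "--version"], capture_output=True, text=True).stdout` and passed to the same function.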

Methods for Manual Smartctl Updates

Manual smartctl updates typically involve downloading the latest version from a trusted source and replacing the existing binaries. This often requires administrative privileges. Package managers, specific to the operating system (like apt on Debian/Ubuntu or yum on CentOS/RHEL), can streamline the process.

Alternatively, compiling from source code provides maximum control but demands technical expertise. After installation, verify the update by re-running `smartctl --version` and confirming that the new version number is displayed.

Data Integrity and Validation

Post-update validation is essential to confirm data accuracy and resolve conflicts, ensuring the database remains reliable and trustworthy after modifications are applied.

Post-Update Data Validation

Following any database update, rigorous data validation is paramount. This process confirms the accuracy and completeness of the information, identifying any discrepancies introduced during the update. Validation should include checks against known data sets, verification of data types, and confirmation of referential integrity.

Automated scripts can assist in this process, flagging potential issues for manual review. Thorough validation minimizes the risk of errors impacting downstream processes and ensures the continued reliability of the smart manual database. It’s a critical step in maintaining data quality.
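A validation script of this kind might look like the following sketch. It uses an in-memory SQLite database as a stand-in for the real server, with hypothetical `manuals` and `steps` tables, and flags two kinds of problem: rows whose parent record is missing (a referential-integrity failure) and rows with a missing required value.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE manuals (id INTEGER PRIMARY KEY, title TEXT NOT NULL);
    CREATE TABLE steps (id INTEGER PRIMARY KEY, manual_id INTEGER, body TEXT);
    INSERT INTO manuals VALUES (1, 'Pump manual');
    INSERT INTO steps VALUES
        (10, 1, 'Close valve'),
        (11, 2, 'Orphaned step'),  -- manual 2 does not exist
        (12, 1, NULL);             -- body is missing
""")

# Referential integrity: steps that point at a manual that is not there.
orphans = conn.execute("""
    SELECT s.id FROM steps AS s
    LEFT JOIN manuals AS m ON m.id = s.manual_id
    WHERE m.id IS NULL
    ORDER BY s.id
""").fetchall()

# Completeness: steps with no body text.
missing_body = conn.execute(
    "SELECT id FROM steps WHERE body IS NULL ORDER BY id"
).fetchall()

print("orphaned steps:", orphans)
print("steps without body:", missing_body)
```

Both queries return only the offending row IDs, which keeps the output short enough for the manual review mentioned above.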

Ensuring Data Accuracy

Maintaining data accuracy post-update requires a multi-faceted approach. Implement checks to verify that updated fields contain valid values and conform to defined business rules. Compare updated records with pre-update backups to identify unintended changes. Focus on critical data elements directly impacted by the update process.
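Comparing post-update records against a pre-update snapshot can be sketched as a keyed diff. In the example below, the record layout and the `expected_fields` parameter (fields the update was supposed to touch) are assumptions; anything that changed outside that set is reported.

```python
def unintended_changes(before: dict[str, dict], after: dict[str, dict], expected_fields: set[str]) -> list[tuple]:
    """List records added, removed, or changed in fields the update should not have touched."""
    findings = []
    for key in sorted(before.keys() | after.keys()):
        old, new = before.get(key), after.get(key)
        if old is None:
            findings.append((key, "added", None))
            continue
        if new is None:
            findings.append((key, "removed", None))
            continue
        for field in sorted(old.keys() | new.keys()):
            if old.get(field) != new.get(field) and field not in expected_fields:
                findings.append((key, "changed", field))
    return findings


snapshot = {
    "A1": {"title": "Pump", "rev": 1},
    "A2": {"title": "Valve", "rev": 3},
}
current = {
    "A1": {"title": "Pump", "rev": 2},  # only 'rev' changed, as expected
    "A2": {"title": "Valv", "rev": 4},  # 'title' changed unexpectedly
    "A3": {"title": "Seal", "rev": 1},  # new record
}
findings = unintended_changes(snapshot, current, expected_fields={"rev"})
print(findings)
```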

Leverage data profiling tools to uncover anomalies and inconsistencies. Regularly audit data quality and establish clear procedures for correcting inaccuracies. Accurate data is the foundation of reliable insights and informed decision-making within the smart manual database.

Addressing Data Conflicts

Data conflicts arise during updates when changes from multiple sources collide. Prioritize conflict resolution strategies based on business rules – last-write-wins, source precedence, or manual review. Implement robust logging to track all conflicts and resolutions for auditability.

Utilize version control to revert to previous states if necessary. Establish clear ownership for data elements to define responsibility for conflict resolution. Proactive data validation and standardization minimize conflicts. Thorough testing of update processes identifies potential conflict scenarios before they impact the smart manual database.

Performance Considerations

Database updates impact performance; optimize processes through efficient scripting, indexing, and monitoring. Regularly assess and adjust update schedules to minimize disruption.

Impact of Updates on Database Performance

Database updates, while essential, inevitably affect system performance. Large-scale updates, particularly those involving extensive data modification or indexing, can lead to increased resource consumption, slower query response times, and potential locking issues. Linear scanning of records during upsert operations, even with Salesforce’s grouping optimization, can still introduce performance bottlenecks.

The impact is magnified in systems with high transaction volumes or limited hardware resources. Careful planning and execution are vital to mitigate these effects. Monitoring performance metrics before, during, and after updates is crucial for identifying and addressing any degradation.

Optimizing Update Processes

To minimize performance impact, optimize update processes through several strategies. Employing DML grouping, as Salesforce does with upserts, significantly reduces the number of database transactions. Utilizing efficient SQL scripts, like the `update database.sql` script for SMART TeamWorks 4 Server, streamlines modifications.

Batch processing, where updates are performed in smaller, manageable chunks, prevents overwhelming the system. Index optimization and regular database maintenance further enhance performance. Thorough testing in a non-production environment is crucial before deploying updates to a live system, ensuring a smooth and efficient process.
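Batch processing can be as simple as a generator that yields fixed-size chunks. The sketch below is generic; the batch size of 200 is an arbitrary illustrative value, not a recommendation from the source.

```python
from typing import Iterable, Iterator


def batches(records: Iterable[dict], size: int) -> Iterator[list[dict]]:
    """Yield successive lists of at most `size` records."""
    if size < 1:
        raise ValueError("size must be at least 1")
    batch: list[dict] = []
    for record in records:
        batch.append(record)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:  # final partial batch
        yield batch


records = [{"id": i} for i in range(450)]
sizes = [len(chunk) for chunk in batches(records, 200)]
print(sizes)
```

Each yielded chunk can then be sent as one update operation, with a pause or a commit between chunks if the target system needs time to keep up.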

Monitoring Performance After Updates

Post-update performance monitoring is vital to identify any regressions or bottlenecks. Key metrics to track include query response times, transaction throughput, and resource utilization (CPU, memory, disk I/O). Regularly review database logs for errors or slow-running queries.

Utilize database performance monitoring tools to establish baselines and detect anomalies. Compare performance metrics before and after the update to quantify improvements or identify areas needing further optimization. Proactive monitoring ensures the database continues to operate efficiently and reliably following modifications.
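A before-and-after comparison can be reduced to a relative change per metric with a tolerance. In this sketch the metric names, the sample values, and the 10% tolerance are assumptions; metrics where a higher value is better (such as throughput) are handled separately from those where lower is better.

```python
def regressions(baseline: dict[str, float], current: dict[str, float],
                tolerance: float = 0.10,
                higher_is_better: frozenset = frozenset()) -> dict[str, float]:
    """Return metrics that got worse by more than `tolerance`, with their relative change."""
    flagged = {}
    for name, before in baseline.items():
        change = (current[name] - before) / before
        # For throughput-style metrics a drop is the bad direction.
        worse = -change if name in higher_is_better else change
        if worse > tolerance:
            flagged[name] = round(change, 3)
    return flagged


before_update = {"query_ms": 120.0, "tx_per_s": 500.0, "cpu_pct": 40.0}
after_update = {"query_ms": 150.0, "tx_per_s": 490.0, "cpu_pct": 42.0}
flagged = regressions(before_update, after_update, higher_is_better=frozenset({"tx_per_s"}))
print(flagged)
```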

Security Implications

Prioritize security best practices during updates, protecting sensitive data and ensuring compliance with industry standards to mitigate potential vulnerabilities.

Security Best Practices During Updates

Implementing robust security measures during database updates is paramount. Always restrict access to the update process to authorized personnel only, utilizing strong authentication protocols. Before applying any changes, thoroughly validate update scripts to prevent malicious code injection.

Encrypt sensitive data both in transit and at rest. Regularly audit update logs for suspicious activity. Maintain a detailed record of all changes made, including who made them and when. Employ a rollback plan to quickly revert to a previous state if issues arise.

Ensure the backup location is secure and protected from unauthorized access. Regularly review and update security protocols to address emerging threats.

Protecting Sensitive Data

Safeguarding sensitive data during smart manual database updates requires a multi-layered approach. Implement data masking or anonymization techniques when working with non-production environments. Utilize encryption for all sensitive fields, both during storage and transmission.
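One common masking technique for non-production copies is to replace identifying values with keyed hashes, so records stay distinguishable and joinable without exposing the original value. The sketch below applies this to an email address; the key, the `user_` prefix, and the choice to keep the domain are assumptions, and the result is pseudonymized rather than fully anonymized.

```python
import hashlib
import hmac


def mask_email(email: str, key: bytes) -> str:
    """Replace the local part of an address with a keyed hash; keep the domain."""
    local, _, domain = email.partition("@")
    token = hmac.new(key, local.encode("utf-8"), hashlib.sha256).hexdigest()[:12]
    return f"user_{token}@{domain}"


key = b"keep-this-key-out-of-source-control"  # hypothetical secret
masked = mask_email("jane.doe@example.com", key)
print(masked)
```

Because the same input and key always give the same output, masked values still match across tables, while a different key per environment prevents values from being correlated between environments.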

Strict access controls are essential; limit data visibility to only those with a legitimate need-to-know. Regularly audit data access logs to identify and investigate any unauthorized attempts.

Ensure compliance with relevant data privacy regulations, such as GDPR or HIPAA. Implement robust data loss prevention (DLP) measures to prevent accidental or malicious data breaches.

Compliance with Industry Standards

Maintaining compliance with industry standards is paramount during smart manual database updates. Adherence to regulations like GDPR, HIPAA, or PCI DSS dictates specific data handling procedures. Thorough documentation of all update processes, including data lineage and access controls, is crucial for auditability.

Regularly review and update database configurations to align with evolving compliance requirements. Implement data retention policies that comply with legal and regulatory obligations.

Ensure that all update activities are conducted in a secure and controlled manner, minimizing the risk of data breaches or non-compliance penalties.

Troubleshooting Update Issues

Common update errors require debugging scripts and rollback procedures. Identifying the root cause swiftly minimizes downtime and data inconsistencies during database maintenance.

Common Update Errors and Solutions

Errors during database updates are common. A frequent cause is a syntax error in a SQL script, which calls for careful review and correction in SQL Server Management Studio. Data type mismatches between source and target fields also cause failures; check compatibility before execution.

Connectivity problems with Salesforce or other external systems can interrupt the process; verify network access and API credentials. DML limitations, such as exceeding governor limits, call for optimizing update processes through bulkification and DML grouping.

Finally, external ID conflicts can prevent accurate record matching. Thoroughly validate external IDs for uniqueness and consistency to avoid unexpected results and maintain data integrity.

Debugging Update Scripts

Effective debugging of update scripts is vital for successful database maintenance. Begin by utilizing logging within your SQL scripts to track execution flow and identify error points. SQL Server Management Studio offers debugging tools for step-by-step execution and variable inspection.

For Salesforce updates, leverage the Salesforce Developer Console’s debug logs to monitor DML operations and pinpoint failures related to governor limits or data validation rules.

Isolate problematic sections of code by commenting out portions of the script and re-running. Thoroughly test scripts in a sandbox environment before deploying to production to minimize risks.

Rollback Procedures

Robust rollback procedures are essential for mitigating risks during database updates. Prior to any update, create a full database backup – this is your primary safety net. For SQL Server, utilize transaction control (BEGIN TRANSACTION, COMMIT, ROLLBACK) within your update scripts to allow for granular rollbacks of failed operations.
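The transaction pattern can be demonstrated end to end with a small runnable sketch. SQLite stands in for SQL Server here, so the statements are `BEGIN`, `COMMIT`, and `ROLLBACK` rather than T-SQL's `BEGIN TRANSACTION`; the table and the failing statement are made up for the example.

```python
import sqlite3

# isolation_level=None puts the connection in autocommit mode, so transactions are explicit.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("CREATE TABLE manuals (id INTEGER PRIMARY KEY, revision INTEGER NOT NULL)")
conn.execute("INSERT INTO manuals VALUES (1, 3)")


def apply_update(conn: sqlite3.Connection, statements: list[str]) -> bool:
    """Run all statements in one transaction; undo everything if any statement fails."""
    conn.execute("BEGIN")
    try:
        for sql in statements:
            conn.execute(sql)
    except sqlite3.Error:
        conn.execute("ROLLBACK")
        return False
    conn.execute("COMMIT")
    return True


ok = apply_update(conn, [
    "UPDATE manuals SET revision = 4 WHERE id = 1",
    "INSERT INTO manuals VALUES (2, NULL)",  # violates NOT NULL, so the batch is undone
])
revision = conn.execute("SELECT revision FROM manuals WHERE id = 1").fetchone()[0]
print(ok, revision)
```

The first statement succeeded on its own, yet its effect is gone after the rollback, which is the granular undo the paragraph refers to. A full backup is still needed for failures that a transaction cannot cover.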

In Salesforce, consider using asynchronous processing and error handling to capture failed records and prevent widespread data corruption. Document a clear rollback plan outlining steps to restore the database to its pre-update state, including estimated downtime.

Future-Proofing Your Database

Planning for future updates, scalability, and adapting to changing needs are vital for long-term database health and sustained performance within evolving systems.

Planning for Future Updates

Proactive planning is essential for seamless future database updates. Anticipate evolving data requirements and system integrations. Regularly assess the database schema and identify potential areas for optimization or expansion. Document all update procedures meticulously, including rollback strategies, to mitigate risks.

Establish a schedule for routine maintenance and updates, considering the impact on users and system performance. Invest in robust backup and recovery mechanisms to safeguard against data loss. Prioritize security best practices throughout the planning process, ensuring data integrity and compliance.

Scalability and Growth

As data volumes increase, ensure your database can handle the load without performance degradation. Consider strategies like database sharding or partitioning to distribute data across multiple servers. Regularly monitor resource utilization – CPU, memory, and storage – to identify bottlenecks.

Implement indexing strategies to optimize query performance as the database grows. Choose a database system that supports horizontal scaling, allowing you to add more resources as needed. Plan for future integrations with other systems and ensure the database architecture can accommodate them efficiently.

Adapting to Changing Needs

Business requirements evolve, demanding flexibility from your database. Design a schema that allows for easy modification and extension without disrupting existing functionality. Embrace agile update processes, enabling rapid response to new data sources or reporting demands.

Regularly review data models to ensure they accurately reflect current business processes. Consider using a database system that supports schema evolution and versioning. Prioritize modularity in update scripts to minimize the impact of changes and facilitate easier rollback procedures if necessary.
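Schema versioning with modular update scripts might be sketched as a small migration runner: each change is a numbered step, the database records which steps have been applied, and re-running the process applies only what is missing. SQLite is used as a stand-in, and the table names and migration contents are hypothetical.

```python
import sqlite3

# Each migration is a small, independent step with a version number.
MIGRATIONS = [
    (1, "CREATE TABLE manuals (id INTEGER PRIMARY KEY, title TEXT NOT NULL)"),
    (2, "ALTER TABLE manuals ADD COLUMN revision INTEGER NOT NULL DEFAULT 1"),
]


def migrate(conn: sqlite3.Connection) -> list[int]:
    """Apply migrations newer than the recorded schema version; return the versions applied."""
    conn.execute("CREATE TABLE IF NOT EXISTS schema_version (version INTEGER NOT NULL)")
    current = conn.execute(
        "SELECT COALESCE(MAX(version), 0) FROM schema_version"
    ).fetchone()[0]
    applied = []
    for version, sql in MIGRATIONS:
        if version <= current:
            continue
        conn.execute("BEGIN")
        try:
            conn.execute(sql)
            conn.execute("INSERT INTO schema_version VALUES (?)", (version,))
        except sqlite3.Error:
            conn.execute("ROLLBACK")  # a failed step leaves the previous version intact
            raise
        conn.execute("COMMIT")
        applied.append(version)
    return applied


conn = sqlite3.connect(":memory:", isolation_level=None)  # explicit transactions
first_run = migrate(conn)
second_run = migrate(conn)  # nothing left to apply
columns = [row[1] for row in conn.execute("PRAGMA table_info(manuals)")]
print(first_run, second_run, columns)
```

Keeping each step small limits the scope of any single failure and makes it clear which change to revisit if a rollback is needed.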